Hello guys!

I just rebuilt my system in an SFF case and finally want to put some work into properly configuring my fan curves.

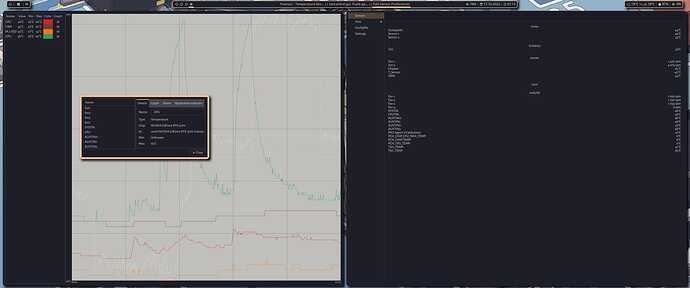

For that I use fancontrol, which I set up via the GUI.

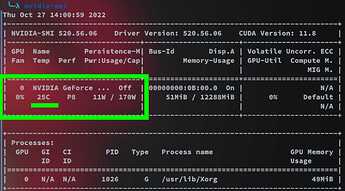

I also use Psensor to graph my temps while benchmarking, gaming and running stable-diffusion. In Psensor I can see my NVIDIA GPU; its ID is `nvctrl NVIDIA GeForce RTX 3070 0 temp`. In fancontrol, sadly, I cannot select this sensor to control my case fans. Since the GPU will be the workhorse in my PC 99% of the time, I want all my fans to ramp up when the GPU is under heavy load.

Right now I can choose from a plethora of sensors to control my fans, but the GPU sensor is not among them.

sensors-detect didn't find it either, and I ran every single detection it offers. Any idea how I could add it manually?
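To make clearer what I mean by adding it manually, here is a rough sketch of one workaround I could imagine: poll the GPU temperature with `nvidia-smi` and write it to a plain file in millidegrees Celsius, the same unit the hwmon `temp*_input` files use. The file path and the function names are made up for illustration, and I have not confirmed that fancontrol (or the GUI) will accept such a file as a temperature source, so treat that part as an assumption.

```python
import subprocess
import time


def parse_gpu_temp_millidegrees(smi_output: str) -> int:
    """Convert nvidia-smi's plain Celsius reading (e.g. "59\n") to millidegrees.

    Only the first line is used, i.e. the first GPU that nvidia-smi reports.
    """
    first_line = smi_output.strip().splitlines()[0]
    return int(first_line) * 1000


def read_gpu_temp_millidegrees() -> int:
    """Ask nvidia-smi for the GPU temperature as a bare number in Celsius."""
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=temperature.gpu",
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_gpu_temp_millidegrees(result.stdout)


def write_temp_file(path: str, millidegrees: int) -> None:
    """Write the reading the way a hwmon temp*_input file presents it."""
    with open(path, "w") as f:
        f.write(f"{millidegrees}\n")


def run_forever(path: str = "/tmp/nvidia_gpu_temp", interval_s: float = 2.0) -> None:
    """Refresh the file every interval_s seconds (path is a hypothetical choice)."""
    while True:
        write_temp_file(path, read_gpu_temp_millidegrees())
        time.sleep(interval_s)
```

Calling `run_forever()` from a small service would keep the file up to date. Whether fancontrol can then be pointed at that file is exactly the part I am unsure about.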

Thanks!

Obligatory inxi, I guess ^^

System:

Kernel: 6.0.1-zen2-1-zen arch: x86_64 bits: 64 compiler: gcc v: 12.2.0

parameters: BOOT_IMAGE=/@/boot/vmlinuz-linux-zen

root=UUID=c3ef0d61-a4ef-469b-96b1-b7a7583d021e rw rootflags=subvol=@

quiet quiet splash rd.udev.log_priority=3 vt.global_cursor_default=0

loglevel=3

Desktop: KDE Plasma v: 5.26.0 tk: Qt v: 5.15.6 info: latte-dock, polybar

wm: kwin_x11 vt: 1 dm: SDDM Distro: Garuda Linux base: Arch Linux

Machine:

Type: Desktop Mobo: ASUSTeK model: ROG STRIX X570-I GAMING v: Rev X.0x

serial: <superuser required> BIOS: American Megatrends v: 4204

date: 02/24/2022

CPU:

Info: model: AMD Ryzen 5 2600X bits: 64 type: MT MCP arch: Zen+ gen: 2

level: v3 note: check built: 2018-21 process: GF 12nm family: 0x17 (23)

model-id: 8 stepping: 2 microcode: 0x800820D

Topology: cpus: 1x cores: 6 tpc: 2 threads: 12 smt: enabled cache:

L1: 576 KiB desc: d-6x32 KiB; i-6x64 KiB L2: 3 MiB desc: 6x512 KiB

L3: 16 MiB desc: 2x8 MiB

Speed (MHz): avg: 4011 high: 4043 min/max: 2200/3600 boost: enabled

scaling: driver: acpi-cpufreq governor: performance cores: 1: 4042 2: 4041

3: 4042 4: 4043 5: 4042 6: 4043 7: 3681 8: 4042 9: 4042 10: 4039 11: 4042

12: 4043 bogomips: 86226

Flags: avx avx2 ht lm nx pae sse sse2 sse3 sse4_1 sse4_2 sse4a ssse3 svm

Vulnerabilities:

Type: itlb_multihit status: Not affected

Type: l1tf status: Not affected

Type: mds status: Not affected

Type: meltdown status: Not affected

Type: mmio_stale_data status: Not affected

Type: retbleed mitigation: untrained return thunk; SMT vulnerable

Type: spec_store_bypass mitigation: Speculative Store Bypass disabled via

prctl

Type: spectre_v1 mitigation: usercopy/swapgs barriers and __user pointer

sanitization

Type: spectre_v2 mitigation: Retpolines, IBPB: conditional, STIBP:

disabled, RSB filling, PBRSB-eIBRS: Not affected

Type: srbds status: Not affected

Type: tsx_async_abort status: Not affected

Graphics:

Device-1: NVIDIA GA104 [GeForce RTX 3070] vendor: ASUSTeK driver: nvidia

v: 520.56.06 alternate: nouveau,nvidia_drm non-free: 515.xx+ status: current

(as of 2022-10) arch: Ampere code: GAxxx process: TSMC n7 (7nm)

built: 2020-22 pcie: gen: 3 speed: 8 GT/s lanes: 16 link-max: gen: 4

speed: 16 GT/s bus-ID: 08:00.0 chip-ID: 10de:2484 class-ID: 0300

Device-2: Microdia USB Live camera type: USB

driver: snd-usb-audio,uvcvideo bus-ID: 5-3.3:7 chip-ID: 0c45:6537

class-ID: 0102 serial: <filter>

Display: x11 server: X.Org v: 21.1.4 with: Xwayland v: 22.1.3

compositors: 1: kwin_x11 2: Picom v: git-f70d0 driver: X: loaded: nvidia

gpu: nvidia display-ID: :0 screens: 1

Screen-1: 0 s-res: 4520x1920 s-dpi: 110 s-size: 1044x443mm (41.10x17.44")

s-diag: 1134mm (44.65")

Monitor-1: DP-0 pos: primary,top-left res: 1080x1920 hz: 60 dpi: 92

size: 299x531mm (11.77x20.91") diag: 609mm (23.99") modes: N/A

Monitor-2: DP-4 pos: primary,bottom-r res: 3440x1440 dpi: 109

size: 800x330mm (31.5x12.99") diag: 865mm (34.07") modes: N/A

OpenGL: renderer: NVIDIA GeForce RTX 3070/PCIe/SSE2 v: 4.6.0 NVIDIA

520.56.06 direct render: Yes

Audio:

Device-1: NVIDIA GA104 High Definition Audio vendor: ASUSTeK

driver: snd_hda_intel bus-ID: 5-3.3:7 v: kernel pcie: chip-ID: 0c45:6537

gen: 3 class-ID: 0102 speed: 8 GT/s serial: <filter> lanes: 16 link-max:

gen: 4 speed: 16 GT/s bus-ID: 08:00.1 chip-ID: 10de:228b class-ID: 0403

Device-2: AMD Family 17h HD Audio vendor: ASUSTeK driver: snd_hda_intel

v: kernel pcie: gen: 3 speed: 8 GT/s lanes: 16 bus-ID: 0a:00.3

chip-ID: 1022:1457 class-ID: 0403

Device-3: Microdia USB Live camera type: USB

driver: snd-usb-audio,uvcvideo

Device-4: C-Media TONOR TC30 Audio Device type: USB

driver: hid-generic,snd-usb-audio,usbhid bus-ID: 5-3.4:9 chip-ID: 0d8c:0134

class-ID: 0300 serial: <filter>

Sound API: ALSA v: k6.0.1-zen2-1-zen running: yes

Sound Interface: sndio v: N/A running: no

Sound Server-1: PulseAudio v: 16.1 running: no

Sound Server-2: PipeWire v: 0.3.59 running: yes

Network:

Device-1: Intel I211 Gigabit Network vendor: ASUSTeK driver: igb v: kernel

pcie: gen: 1 speed: 2.5 GT/s lanes: 1 port: f000 bus-ID: 04:00.0

chip-ID: 8086:1539 class-ID: 0200

IF: enp4s0 state: up speed: 1000 Mbps duplex: full mac: <filter>

IF-ID-1: anbox0 state: down mac: <filter>

Bluetooth:

Device-1: Intel AX200 Bluetooth type: USB driver: btusb v: 0.8

bus-ID: 3-6:3 chip-ID: 8087:0029 class-ID: e001

Report: bt-adapter ID: hci0 rfk-id: 0 state: up address: <filter>

Device-2: ASUSTek Broadcom BCM20702A0 Bluetooth type: USB driver: btusb

v: 0.8 bus-ID: 5-2.1.1:8 chip-ID: 0b05:17cb class-ID: fe01 serial: <filter>

Report: ID: hci1 rfk-id: 1 state: up address: N/A

Drives:

Local Storage: total: 3.44 TiB used: 1.22 TiB (35.3%)

SMART Message: Unable to run smartctl. Root privileges required.

ID-1: /dev/nvme0n1 maj-min: 259:0 vendor: Samsung model: SSD 970 EVO

250GB size: 232.89 GiB block-size: physical: 512 B logical: 512 B

speed: 31.6 Gb/s lanes: 4 type: SSD serial: <filter> rev: 1B2QEXE7

temp: 43.9 C scheme: MBR

ID-2: /dev/sda maj-min: 8:0 vendor: Samsung model: SSD 870 EVO 2TB

size: 1.82 TiB block-size: physical: 512 B logical: 512 B speed: 6.0 Gb/s

type: SSD serial: <filter> rev: 2B6Q scheme: GPT

ID-3: /dev/sdb maj-min: 8:16 vendor: Crucial model: CT1000MX500SSD1

size: 931.51 GiB block-size: physical: 4096 B logical: 512 B

speed: 6.0 Gb/s type: SSD serial: <filter> rev: 033 scheme: GPT

ID-4: /dev/sdc maj-min: 8:32 model: iSCSI Disk size: 500 GiB block-size:

physical: 16384 B logical: 512 B type: N/A serial: N/A rev: 0123

Partition:

ID-1: / raw-size: 232.88 GiB size: 232.88 GiB (100.00%) used: 158.76 GiB

(68.2%) fs: btrfs dev: /dev/nvme0n1p1 maj-min: 259:1

ID-2: /home raw-size: 232.88 GiB size: 232.88 GiB (100.00%) used: 158.76

GiB (68.2%) fs: btrfs dev: /dev/nvme0n1p1 maj-min: 259:1

ID-3: /var/log raw-size: 232.88 GiB size: 232.88 GiB (100.00%) used: 158.76

GiB (68.2%) fs: btrfs dev: /dev/nvme0n1p1 maj-min: 259:1

ID-4: /var/tmp raw-size: 232.88 GiB size: 232.88 GiB (100.00%) used: 158.76

GiB (68.2%) fs: btrfs dev: /dev/nvme0n1p1 maj-min: 259:1

Swap:

Kernel: swappiness: 133 (default 60) cache-pressure: 100 (default)

ID-1: swap-1 type: zram size: 15.53 GiB used: 11.38 GiB (73.3%)

priority: 100 dev: /dev/zram0

Sensors:

System Temperatures: cpu: 49.5 C mobo: 47.0 C gpu: nvidia temp: 59 C

Fan Speeds (RPM): fan-1: 1073 fan-2: 2039 fan-5: 1077 fan-7: 0

gpu: nvidia fan: 69%

Info:

Processes: 421 Uptime: 16h 21m wakeups: 1 Memory: 15.53 GiB used: 13.62 GiB

(87.7%) Init: systemd v: 251 default: graphical tool: systemctl

Compilers: gcc: 12.2.0 alt: 11 clang: 14.0.6 Packages: 2191 pm: pacman

pkgs: 2179 libs: 576 tools: pamac,paru pm: flatpak pkgs: 12 Shell: fish

v: 3.5.1 running-in: alacritty inxi: 3.3.22

Garuda (2.6.8-1):

System install date: 2022-09-27

Last full system update: 2022-10-16

Is partially upgraded: Yes

Relevant software: NetworkManager

Windows dual boot: <superuser required>

Snapshots: Snapper

Failed units: shadow.service systemd-networkd-wait-online.service